Integrating Google Analytics with Google Ads

Learn how to effectively integrate Google Analytics with Google Ads for better tracking and optimization of your campaigns.

Published: 2024-08-09

By: Michael Mares

P-values are misunderstood, abused, and not made for digital marketing.

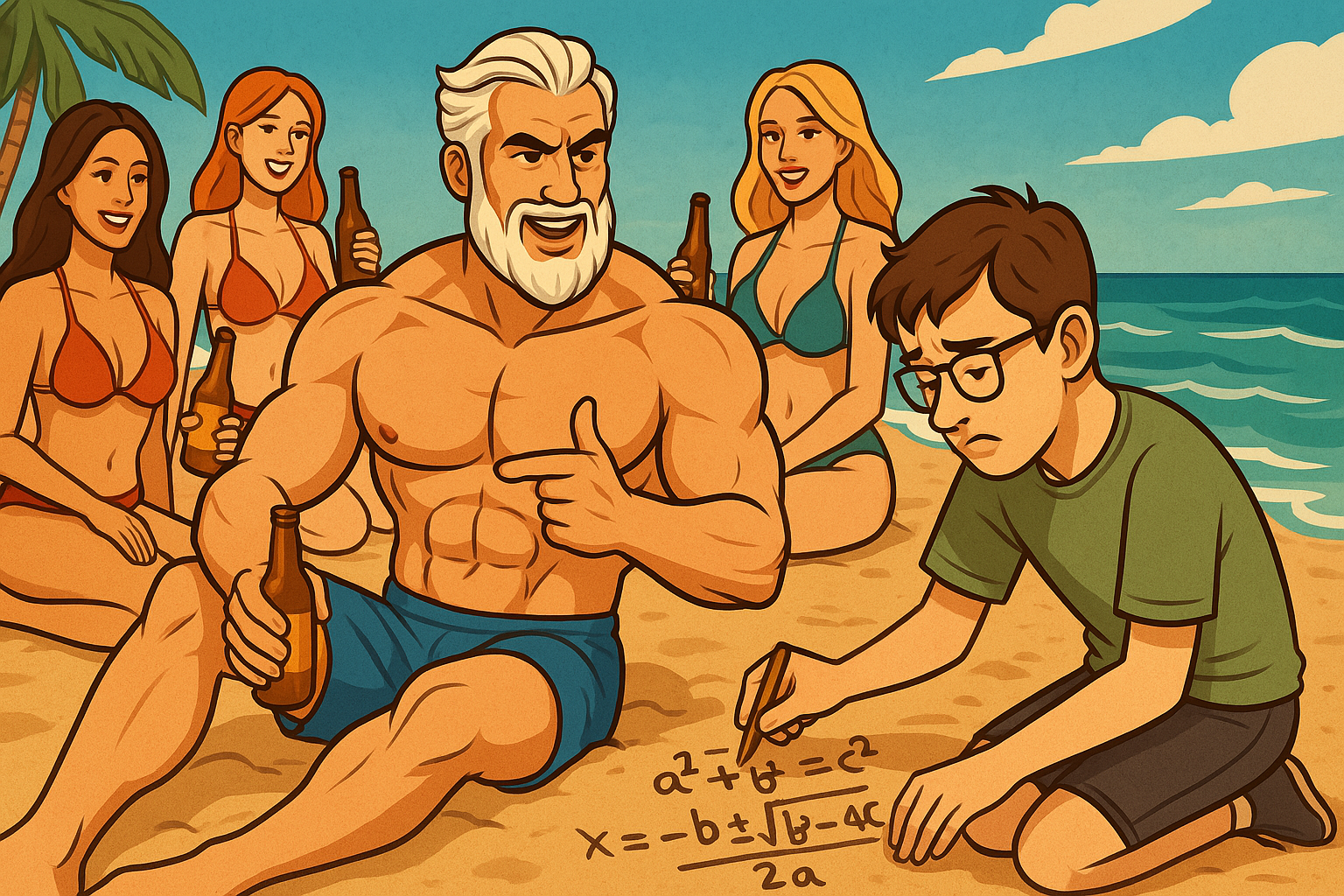

Let’s say you’re running an A/B test on your landing page. Variant A is the original. Variant B is your new brainchild, crafted after a brainstorm fueled by cold brew and marketing memes.

You launch the test, collect data, and run a t-test.

The result?

A p-value of 0.04. You celebrate. Statistically significant! The team huddles to launch variant B across the board.

Three weeks later, conversions are down. What happened?

You got p-valued.

A p-value tells you the probability of observing data at least as extreme as yours, assuming the null hypothesis (usually “there’s no real difference between A and B”) is true.

That’s not the same as saying “there’s a 96% chance B is better.” It’s more like, “if there were no real difference between A and B, a result at least this extreme would only show up 4% of the time.”

See the issue?

Digital marketers often read p-values as the first statement, and it’s easy to see why. “Statistically significant” sounds like “actually better.” But it’s not.
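To make the definition concrete, here’s a minimal Python sketch of a two-sided p-value for conversion rates. It swaps in a pooled two-proportion z-test (a normal approximation commonly used for conversion data) for the t-test in the story, the function name is illustrative, and the visitor and conversion counts are hypothetical. Whatever number it returns answers “how often would a gap at least this large appear if A and B converted at the same rate?”, not “how likely is B to be better?”

```python
import math


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for H0: both variants share one conversion rate.

    Hypothetical helper using the pooled two-proportion z-test
    (normal approximation), not any specific tool's implementation.
    """
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # zero (or all) conversions in both arms: no evidence either way
    z = (conv_b / n_b - conv_a / n_a) / se
    # P(|Z| >= |z|) under the null: "at least as extreme as what we observed"
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical traffic: 5,000 visitors per variant.
p = two_proportion_p_value(conv_a=200, n_a=5000, conv_b=245, n_b=5000)
print(f"p = {p:.3f}")
```

Nothing in that calculation is a probability that B beats A; it only measures how surprising the data would be in a world where A and B are identical.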

Also:

And that’s not even the worst part…

In the fast-paced world of paid search and landing pages, you’re constantly testing.

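Which means you’re tempted to check results every few days and call the test the moment the p-value dips below 0.05.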

That’s p-hacking.

And it inflates your false positive rate faster than a Black Friday CTR.

The more you peek, the more likely you’ll find “significance” by chance alone. You’re not discovering gold — you’re finding fool’s gold, statistically speaking.
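A quick simulation shows what peeking costs. This sketch runs A/A tests, where both variants share one true conversion rate, so every “significant” result is a false positive. It compares a tester who checks after every 100 visitors per arm and stops at the first p < 0.05 with one who looks only once at the end. The traffic numbers, conversion rate, and function names are hypothetical, and the pooled z-test is a stand-in for whatever test your tool actually runs.

```python
import math
import random


def two_sided_p(conv_a, n_a, conv_b, n_b):
    """Pooled two-proportion z-test, two-sided p-value (hypothetical helper)."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rates(experiments=500, visitors=1000, peek_every=100,
                         rate=0.05, alpha=0.05, seed=7):
    """Simulate A/A tests and return
    (false positive rate when peeking and stopping early,
     false positive rate with a single look at the end).
    """
    rng = random.Random(seed)
    peeker_wins = 0
    single_look_wins = 0
    for _ in range(experiments):
        conv_a = conv_b = 0
        declared = False
        for n in range(1, visitors + 1):
            # Both arms convert at the same true rate: any "win" is noise.
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # The peeker checks at every checkpoint until they declare a winner.
            if not declared and n % peek_every == 0:
                declared = two_sided_p(conv_a, n, conv_b, n) < alpha
        peeker_wins += declared
        single_look_wins += two_sided_p(conv_a, visitors, conv_b, visitors) < alpha
    return peeker_wins / experiments, single_look_wins / experiments


peek_rate, single_rate = false_positive_rates()
print(f"peeking every 100 visitors: {peek_rate:.1%} false positives")
print(f"single look at the end:     {single_rate:.1%} false positives")
```

With these defaults the peeker also gets the final look, so their false positive rate can never fall below the single-look rate. In practice it lands well above the nominal 5%, and it tends to climb as `peek_every` shrinks.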

So what’s the alternative?

Bayesian probability flips the question: given the data you collected, what’s the probability that B is actually better than A?

This is exactly what you thought p-values told you.

A Bayesian A/B test will output something like:

There’s a 91% probability that variant B has a higher conversion rate than variant A.

That’s a number your marketing team, your boss, and your grandma can understand. No mental gymnastics required.
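Here’s a minimal sketch of where a number like that can come from, assuming a uniform Beta(1, 1) prior on each variant’s conversion rate (a common default, not necessarily what any given testing tool uses). With that prior, each rate’s posterior is a Beta distribution; drawing from both posteriors and counting how often B’s draw beats A’s gives a Monte Carlo estimate of the probability that B is better. The function name and counts are hypothetical.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=50_000, seed=42):
    """Monte Carlo estimate of P(rate_B > rate_A | data).

    Assumes a Beta(1, 1) prior on each rate, so each posterior is
    Beta(1 + conversions, 1 + non-conversions).
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical traffic: 5,000 visitors per variant.
print(f"P(B beats A) = {prob_b_beats_a(200, 5000, 245, 5000):.1%}")
```

A stronger prior (for example, one built from past tests on the same page) would pull both posteriors toward historical rates; the flat prior here just lets the data speak.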

Bonus? Bayesian methods handle:

Imagine two variants:

You run a Bayesian test and get:

There’s an 87% probability B is better, with a 95% credible interval for the difference running from about -0.5% to 1.8%.

That’s enough to say:

Compare that to a p-value test that says:

p = 0.07 — not significant.

So you do… nothing. And miss out on a possible uplift.
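The same posterior draws also produce a credible interval. This sketch, again assuming Beta(1, 1) priors and hypothetical counts (the function name is illustrative too), samples the difference in conversion rates (B minus A), then reads off the probability that it’s positive and an equal-tailed 95% credible interval from the sorted samples.

```python
import random


def posterior_difference_summary(conv_a, n_a, conv_b, n_b,
                                 draws=50_000, level=0.95, seed=1):
    """Summarize the posterior of (rate_B - rate_A) under Beta(1, 1) priors.

    Returns (probability B is better, lower bound, upper bound), where the
    bounds are an equal-tailed credible interval at the given level.
    """
    rng = random.Random(seed)
    diffs = sorted(
        rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        - rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        for _ in range(draws)
    )
    prob_b_better = sum(d > 0 for d in diffs) / draws
    tail = (1 - level) / 2
    lower = diffs[int(tail * draws)]             # ~2.5th percentile
    upper = diffs[int((1 - tail) * draws) - 1]   # ~97.5th percentile
    return prob_b_better, lower, upper


prob, lower, upper = posterior_difference_summary(200, 5000, 245, 5000)
print(f"P(B better) = {prob:.1%}, 95% CI for B - A: [{lower:.2%}, {upper:.2%}]")
```

Reading the two outputs together is a handy consistency check: if the lower end of an equal-tailed 95% interval sits above zero, at most 2.5% of the draws fall at or below zero, so the probability that B is better must be at least about 97.5%.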
